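💥 The Root of Mojibake: When Bytes Meet Characters

In the digital world, all information ultimately becomes 0s and 1s. When we process text in Python, we are really encoding (converting characters to bytes) and decoding (converting bytes back to characters). The most common error in this process is:

UnicodeDecodeError: 'gbk' codec can't decode byte 0x80 in position 2: illegal multibyte sequence

At its core, this error is an encoding mismatch: the file was saved with one encoding but read with another. Let's start from the basic principles: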

"""
Encoding basics demo.
Shows how characters become bytes, and how mismatched encodings produce mojibake.
"""

def demonstrate_encoding_basics():
    """Demonstrate the basics of encoding and decoding."""
    # 1. A string as held in memory (Chinese sample text kept on purpose)
    text = "你好,Python编码!"
    print("Original string:", text)

    # 2. Encode to bytes with different encodings
    encodings = ['utf-8', 'gbk', 'utf-16', 'latin-1']
    for encoding in encodings:
        try:
            byte_data = text.encode(encoding)
            print(f"\nEncoding: {encoding}")
            print(f"Byte length: {len(byte_data)}")
            print(f"Bytes: {byte_data}")
            print(f"Hex: {byte_data.hex()}")
        except UnicodeEncodeError as e:
            # latin-1 cannot represent Chinese characters, so it ends up here
            print(f"\nEncoding with {encoding} failed: {e}")

    # 3. Decoding demo
    print("\n" + "=" * 50)
    print("Decoding demo:")

    # Encode with UTF-8
    utf8_bytes = text.encode('utf-8')

    # Try to decode the same bytes with different encodings
    for encoding in ['utf-8', 'gbk', 'latin-1']:
        try:
            decoded = utf8_bytes.decode(encoding)
            print(f"Decoded as {encoding}: {decoded}")
        except UnicodeDecodeError:
            print(f"Decoding as {encoding} failed: incompatible encoding")

if __name__ == "__main__":
    demonstrate_encoding_basics()

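Run this code and you will see that the same string yields completely different byte sequences under different encodings. That is the root of mojibake: the byte sequence is correct, but the wrong encoding table is used to decode it.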
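The mismatch can be reproduced in a few lines. The sketch below is only an illustration: the helper name `garble` and the sample text are assumptions, not part of the original code. It shows that a wrong decoder either produces garbage silently or raises, and that the Latin-1 case can be undone because Latin-1 maps every byte to exactly one code point.

```python
def garble(text: str, written_as: str, read_as: str) -> str:
    """Encode text with one codec, then decode the same bytes with another."""
    return text.encode(written_as).decode(read_as)

original = "你好"

# UTF-8 bytes misread as Latin-1: decoding never fails, but the result is mojibake.
mojibake = garble(original, "utf-8", "latin-1")
print(repr(mojibake))

# Latin-1 is a one-byte-per-character mapping, so this particular mistake is reversible.
recovered = mojibake.encode("latin-1").decode("utf-8")
print(recovered)

# A byte that is invalid in the target codec raises instead of garbling.
try:
    b"\xff".decode("utf-8")
    raised = False
except UnicodeDecodeError as exc:
    raised = True
    print("decode failed:", exc.reason)
```

Recovery like this only works when the wrong decoder was lossless; once replacement characters have been written to disk, the original bytes are gone.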

🎯 A Complete Solution for File Encoding in Python

1. The Golden Rule: One Consistent Encoding

"""
Core code for consistent encoding.
Every file operation must specify its encoding explicitly.
"""
import csv
import os

def create_files_with_unified_encoding():
    """Create files that all use a consistent encoding."""
    # Sample data (Chinese values kept on purpose: they expose encoding problems)
    data = [
        ["ID", "姓名", "年龄", "城市"],
        [1, "张三", 25, "北京"],
        [2, "李四", 30, "上海"],
        [3, "王五", 28, "广州"]
    ]

    # 🎯 Key point: always specify the encoding explicitly!
    # Case 1: save as CSV
    with open('data_utf8.csv', 'w', encoding='utf-8', newline='') as f:
        writer = csv.writer(f)
        writer.writerows(data)

    # Case 2: save as TXT
    with open('data_utf8.txt', 'w', encoding='utf-8') as f:
        for row in data:
            f.write(','.join(str(x) for x in row) + '\n')

    # Case 3: save as UTF-8 with BOM (friendly to Excel on Windows)
    with open('data_utf8_bom.csv', 'w', encoding='utf-8-sig', newline='') as f:
        writer = csv.writer(f)
        writer.writerows(data)

    # Verify the files
    print("✅ Files created; verifying encodings:")
    files = ['data_utf8.csv', 'data_utf8.txt', 'data_utf8_bom.csv']
    for filename in files:
        try:
            with open(filename, 'rb') as f:
                first_bytes = f.read(3)
            if first_bytes == b'\xef\xbb\xbf':
                encoding = 'UTF-8-SIG (with BOM)'
            else:
                # Confirm that the whole file decodes as UTF-8
                with open(filename, 'r', encoding='utf-8') as f2:
                    f2.read()
                encoding = 'UTF-8 (no BOM)'
            print(f"  📁 {filename:20} -> {encoding}")
        except Exception as e:
            print(f"  ❌ {filename:20} -> read failed: {e}")
    return files

# Clean up and test
if __name__ == "__main__":
    # Remove old files
    for f in ['data_utf8.csv', 'data_utf8.txt', 'data_utf8_bom.csv']:
        if os.path.exists(f):
            os.remove(f)
    files = create_files_with_unified_encoding()
    print("\n✅ All files were created with a consistent encoding!")
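One practical consequence of the BOM variant is worth seeing on its own: a file written with `utf-8-sig` should also be read with `utf-8-sig`, otherwise the BOM stays glued to the first field. The following is a minimal sketch under that assumption; the helper `first_header` and the file name `bom_demo.csv` are hypothetical and exist only for this illustration.

```python
import csv
import os
import tempfile

def first_header(path: str, encoding: str) -> str:
    """Return the first header cell of a CSV file read with the given encoding."""
    with open(path, "r", encoding=encoding, newline="") as f:
        return next(csv.reader(f))[0]

# Write a small CSV with a BOM into a temporary directory.
tmp_dir = tempfile.mkdtemp()
csv_path = os.path.join(tmp_dir, "bom_demo.csv")  # hypothetical file name
with open(csv_path, "w", encoding="utf-8-sig", newline="") as f:
    csv.writer(f).writerows([["ID", "姓名"], [1, "张三"]])

with_bom = first_header(csv_path, "utf-8")         # BOM stays attached to the first cell
without_bom = first_header(csv_path, "utf-8-sig")  # BOM is stripped on read

print(repr(with_bom))
print(repr(without_bom))

os.remove(csv_path)
os.rmdir(tmp_dir)
```

Reading with `utf-8-sig` is also safe for files that have no BOM, which makes it a reasonable choice when the input may come from Excel.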

2. Automatic Encoding Detection and Conversion Tool

"""
Smart encoding detection and conversion.
Identifies a file's encoding heuristically and converts it to a target encoding.
"""
import glob
import os
from typing import Optional, Tuple


class EncodingConverter:
    """Encoding converter."""

    def __init__(self):
        self.supported_encodings = [
            'utf-8', 'utf-8-sig', 'utf-16', 'utf-16le', 'utf-16be',
            'gbk', 'gb2312', 'gb18030',        # Simplified Chinese
            'big5',                            # Traditional Chinese
            'shift_jis', 'euc-jp',             # Japanese
            'euc-kr',                          # Korean
            'latin-1', 'cp1252', 'iso-8859-1'  # Western European
        ]

    def detect_encoding(self, filename: str, sample_size: int = 1000) -> Tuple[str, float]:
        """
        Detect a file's encoding.

        Args:
            filename: file name
            sample_size: sample size in bytes

        Returns:
            (encoding name, confidence)
        """
        if not os.path.exists(filename):
            raise FileNotFoundError(f"File not found: {filename}")

        with open(filename, 'rb') as f:
            raw_data = f.read(sample_size)

        if not raw_data:
            return 'utf-8', 0.0

        # Check for a BOM (byte order mark).
        # Note: 'utf-16le'/'utf-16be' leave the BOM in the decoded text as U+FEFF.
        if raw_data.startswith(b'\xef\xbb\xbf'):
            return 'utf-8-sig', 1.0
        elif raw_data.startswith(b'\xff\xfe'):
            return 'utf-16le', 1.0
        elif raw_data.startswith(b'\xfe\xff'):
            return 'utf-16be', 1.0

        # Try common encodings in order. This is a heuristic: 'latin-1' accepts
        # any byte sequence, so it only serves as a last resort.
        encodings_to_try = ['utf-8', 'gbk', 'gb2312', 'gb18030', 'latin-1', 'cp1252']
        for enc in encodings_to_try:
            try:
                # Try decoding the sample
                raw_data.decode(enc)
                # If that works, confirm by reading the start of the file in text mode
                with open(filename, 'r', encoding=enc, errors='strict') as f:
                    f.read(1024)
                return enc, 0.9
            except UnicodeDecodeError:
                continue
            except Exception:
                pass

        # Fall back to UTF-8
        return 'utf-8', 0.5

    def convert_encoding(self, source_file: str, target_file: str,
                         target_encoding: str = 'utf-8',
                         source_encoding: Optional[str] = None) -> bool:
        """
        Convert a file's encoding.

        Args:
            source_file: source file
            target_file: target file
            target_encoding: target encoding
            source_encoding: source encoding (auto-detected when None)

        Returns:
            True on success, False otherwise
        """
        try:
            # 1. Detect the source encoding
            if source_encoding is None:
                source_encoding, confidence = self.detect_encoding(source_file)
                print(f"🔍 Detected encoding: {source_encoding} (confidence: {confidence:.1%})")

            # 2. Read the source file. Strict decoding is used here so that a wrong
            #    guess raises UnicodeDecodeError and triggers the fallback below
            #    (with errors='replace' that branch could never run).
            with open(source_file, 'r', encoding=source_encoding, errors='strict') as f:
                content = f.read()

            # 3. Write the target file
            with open(target_file, 'w', encoding=target_encoding) as f:
                f.write(content)

            print(f"✅ Converted: {source_file} -> {target_file}")
            print(f"   Source encoding: {source_encoding} -> target encoding: {target_encoding}")
            return True

        except UnicodeDecodeError as e:
            print(f"❌ Decoding failed: {e}")
            print("💡 Trying other encodings:")
            # Try other encodings, replacing undecodable bytes
            for enc in ['gbk', 'gb2312', 'gb18030', 'latin-1', 'cp1252', 'utf-8']:
                if enc == source_encoding:
                    continue
                try:
                    with open(source_file, 'r', encoding=enc, errors='replace') as f:
                        content = f.read()
                    with open(target_file, 'w', encoding=target_encoding) as f:
                        f.write(content)
                    print(f"✅ Converted successfully using {enc}")
                    return True
                except Exception as e2:
                    print(f"   Attempt with {enc} failed: {e2}")
                    continue
            print("❌ All encoding attempts failed")
            return False

        except Exception as e:
            print(f"❌ Conversion failed: {e}")
            return False

    def batch_convert(self, file_patterns: list, target_encoding: str = 'utf-8'):
        """
        Convert the encoding of many files.

        Args:
            file_patterns: list of glob patterns
            target_encoding: target encoding
        """
        for pattern in file_patterns:
            for filepath in glob.glob(pattern):
                if os.path.isfile(filepath):
                    print(f"\n📁 Processing file: {filepath}")
                    # Build the new file name
                    dirname, basename = os.path.split(filepath)
                    name, ext = os.path.splitext(basename)
                    new_basename = f"{name}_{target_encoding}{ext}"
                    new_path = os.path.join(dirname, new_basename)
                    # Convert the encoding
                    self.convert_encoding(filepath, new_path, target_encoding)


# Usage example
if __name__ == "__main__":
    converter = EncodingConverter()

    # Test content (Chinese sample text kept on purpose)
    test_content = "中文测试文件,包含特殊字符:★☆✔✘→←↑↓"
    test_content_eng = "English test file with special characters: ★☆✔✘→←↑↓"

    print("🔄 Creating test files...")

    # Save test files with different encodings
    test_files = []

    # UTF-8 file
    filename = "test_utf8.txt"
    with open(filename, 'w', encoding='utf-8') as f:
        f.write(test_content)
    test_files.append(filename)
    print(f"✅ Created test file: {filename} (utf-8)")

    # UTF-8 with BOM
    filename = "test_utf8_bom.txt"
    with open(filename, 'w', encoding='utf-8-sig') as f:
        f.write(test_content)
    test_files.append(filename)
    print(f"✅ Created test file: {filename} (utf-8-sig)")

    # GBK file (Chinese encoding); this fails if the text contains
    # characters that GBK cannot represent
    try:
        filename = "test_gbk.txt"
        with open(filename, 'w', encoding='gbk') as f:
            f.write(test_content)
        test_files.append(filename)
        print(f"✅ Created test file: {filename} (gbk)")
    except (UnicodeEncodeError, LookupError) as e:
        print(f"⚠️ Could not create the GBK file: {e}")

    print(f"\n📊 Created {len(test_files)} test files")

    # Detect the encoding of each file
    print("\n🔍 Detecting file encodings:")
    for test_file in test_files:
        if os.path.exists(test_file):
            encoding, confidence = converter.detect_encoding(test_file)
            print(f"  {test_file}: {encoding} ({confidence:.1%})")

    # Test a single-file conversion
    print("\n🔄 Testing single-file conversion:")
    if test_files:
        source_file = test_files[0]
        target_file = "converted_utf8.txt"
        if converter.convert_encoding(source_file, target_file, 'utf-8'):
            print(f"✅ Conversion done, target file: {target_file}")
            # Show the converted content
            try:
                with open(target_file, 'r', encoding='utf-8') as f:
                    content = f.read()
                print(f"📝 Converted content: {content[:50]}...")
            except (OSError, UnicodeDecodeError):
                print("⚠️ Could not read the converted file")
            # Remove the temporary file
            if os.path.exists(target_file):
                os.remove(target_file)
                print(f"🗑️ Removed temporary file: {target_file}")

    # Remove the test files
    print("\n🗑️ Removing test files...")
    for test_file in test_files:
        if os.path.exists(test_file):
            os.remove(test_file)
            print(f"  Deleted: {test_file}")

    print("\n✨ All tests finished!")

    # Print usage instructions
    print("\n📖 Usage:")
    print("1. Create the converter:")
    print("   converter = EncodingConverter()")
    print("")
    print("2. Detect a file's encoding:")
    print("   encoding, confidence = converter.detect_encoding('filename.txt')")
    print("")
    print("3. Convert a single file:")
    print("   converter.convert_encoding('source.txt', 'target.txt', 'utf-8')")
    print("")
    print("4. Convert in batch:")
    print("   converter.batch_convert(['*.txt', '*.csv'], 'utf-8')")
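Detection by trial decoding is a heuristic, and the order of the candidates matters. The short sketch below illustrates why, using only built-in codecs; the helper name `decodes_cleanly` is an assumption made for this example. A successful decode proves only that the bytes are legal in that codec, not that the codec is the right one.

```python
def decodes_cleanly(data: bytes, encoding: str) -> bool:
    """Return True if data decodes under encoding without raising."""
    try:
        data.decode(encoding)
        return True
    except UnicodeDecodeError:
        return False

utf8_sample = "你好".encode("utf-8")
gbk_sample = "你好".encode("gbk")

# Latin-1 assigns a character to every byte value, so it accepts any input.
latin1_accepts_all = decodes_cleanly(bytes(range(256)), "latin-1")

# These UTF-8 bytes also happen to be legal GBK, but they decode to different text.
gbk_accepts_utf8 = decodes_cleanly(utf8_sample, "gbk")
misread = utf8_sample.decode("gbk")

# Real GBK bytes are not valid UTF-8, which is why trying UTF-8 first is a sensible order.
utf8_accepts_gbk = decodes_cleanly(gbk_sample, "utf-8")

print(latin1_accepts_all, gbk_accepts_utf8, repr(misread), utf8_accepts_gbk)
```

For this reason a confidence value from such a detector is best treated as a rough hint, and an explicitly known source encoding should always take precedence.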

3. A Context Manager for Consistent Encoding

"""
A safe file-handling context manager.
Ensures that reads and writes use the correct encoding.
"""
import csv
import os
from pathlib import Path
from typing import IO, Any, List, Optional, Union


class SafeFileOpener:
    """
    A safe file-handling context manager.
    Picks the encoding automatically so reads and writes stay consistent.
    """

    def __init__(self,
                 filename: Union[str, Path],
                 mode: str = 'r',
                 encoding: Optional[str] = None,
                 detect_encoding: bool = False,
                 fallback_encoding: str = 'utf-8'):
        """
        Initialize the opener.

        Args:
            filename: file name
            mode: open mode
            encoding: explicit encoding
            detect_encoding: whether to auto-detect the encoding
            fallback_encoding: encoding used when nothing else applies
        """
        self.filename = str(filename)
        self.mode = mode
        self.requested_encoding = encoding
        self.detect_encoding = detect_encoding
        self.fallback_encoding = fallback_encoding
        self.actual_encoding = None  # set in __enter__
        self.file = None

    def _determine_encoding(self) -> str:
        """Decide which encoding to use."""
        # 1. Use the requested encoding if one was given
        if self.requested_encoding:
            return self.requested_encoding

        # 2. Default to utf-8 when writing
        if 'w' in self.mode or 'a' in self.mode:
            return 'utf-8'

        # 3. When reading, detect the encoding if asked to
        if self.detect_encoding and os.path.exists(self.filename):
            try:
                with open(self.filename, 'rb') as f:
                    raw = f.read(1024)  # read more bytes for better detection accuracy

                # Check for a BOM
                if raw.startswith(b'\xef\xbb\xbf'):
                    return 'utf-8-sig'
                elif raw.startswith(b'\xff\xfe'):
                    return 'utf-16le'
                elif raw.startswith(b'\xfe\xff'):
                    return 'utf-16be'

                # Try common encodings; 'latin-1' accepts any bytes, so it goes last
                for enc in ('utf-8', 'gbk', 'latin-1'):
                    try:
                        raw.decode(enc)
                        return enc
                    except UnicodeDecodeError:
                        pass
            except Exception:
                pass

        # 4. Otherwise use the fallback encoding (utf-8 by default)
        return self.fallback_encoding

    def _open(self, encoding: str) -> IO:
        """Open the file; CSV/TSV files need newline='' for the csv module."""
        if self.filename.endswith(('.csv', '.tsv')):
            return open(self.filename, self.mode, encoding=encoding, newline='')
        return open(self.filename, self.mode, encoding=encoding)

    def __enter__(self) -> IO:
        """Enter the context."""
        encoding = self._determine_encoding()
        try:
            self.file = self._open(encoding)
        except UnicodeDecodeError:
            # Retry with the fallback encoding. Note that open() does not decode
            # file contents, so decoding errors normally surface later, in read().
            if encoding != self.fallback_encoding:
                print(f"⚠️ Decoding with {encoding} failed, trying {self.fallback_encoding}")
                encoding = self.fallback_encoding
                self.file = self._open(encoding)
            else:
                raise

        # Record the encoding actually used
        self.actual_encoding = encoding
        if 'w' in self.mode or 'a' in self.mode:
            print(f"📝 Writing file: {self.filename} (encoding: {encoding})")
        else:
            print(f"📖 Reading file: {self.filename} (encoding: {encoding})")
        return self.file

    def __exit__(self, exc_type, exc_val, exc_tb):
        """Exit the context."""
        if self.file:
            self.file.close()
        if exc_type is None:
            print(f"✅ File operation finished: {self.filename}")
        else:
            print(f"❌ File operation failed: {self.filename} - {exc_val}")
        # Returning False lets the exception propagate
        return False


def read_file_safely(filename: str, **kwargs) -> str:
    """Read a file safely."""
    with SafeFileOpener(filename, 'r', **kwargs) as f:
        return f.read()


def write_file_safely(filename: str, content: str, **kwargs):
    """Write a file safely."""
    with SafeFileOpener(filename, 'w', **kwargs) as f:
        f.write(content)


def write_csv_safely(filename: str, data: List[List[Any]],
                     headers: Optional[List[str]] = None, **kwargs):
    """Write a CSV file safely."""
    with SafeFileOpener(filename, 'w', **kwargs) as f:
        writer = csv.writer(f)
        if headers:
            writer.writerow(headers)
        writer.writerows(data)


def read_csv_safely(filename: str, **kwargs) -> List[List[str]]:
    """Read a CSV file safely."""
    with SafeFileOpener(filename, 'r', **kwargs) as f:
        reader = csv.reader(f)
        return list(reader)


class EncodingConverter:
    """Encoding converter."""

    @staticmethod
    def convert_encoding(input_file: str, output_file: str,
                         target_encoding: str = 'utf-8',
                         source_encoding: Optional[str] = None):
        """
        Convert a file's encoding.

        Args:
            input_file: input file
            output_file: output file
            target_encoding: target encoding
            source_encoding: source encoding (auto-detected when None)
        """
        try:
            # Read the source file. Keep the opener object: it, not the file
            # handle, records which encoding was actually used.
            source = SafeFileOpener(input_file, 'r',
                                    encoding=source_encoding,
                                    detect_encoding=True)
            with source as f:
                content = f.read()

            # Write the target file
            with SafeFileOpener(output_file, 'w',
                                encoding=target_encoding) as f:
                f.write(content)

            print(f"🔄 Converted: {input_file} -> {output_file}")
            print(f"   Source encoding: {source.actual_encoding or 'unknown'}")
            print(f"   Target encoding: {target_encoding}")
        except Exception as e:
            print(f"❌ Conversion failed for {input_file}: {e}")


# Usage example
if __name__ == "__main__":
    # 1. Write files safely (Chinese sample values kept on purpose)
    data = [
        ["项目", "价格", "数量"],
        ["苹果", 5.5, 10],
        ["香蕉", 3.2, 20],
        ["橙子", 4.8, 15]
    ]

    # Write files in different encodings
    print("📤 Writing files in different encodings:")
    write_csv_safely('data_utf8.csv', data, encoding='utf-8')
    write_csv_safely('data_gbk.csv', data, encoding='gbk')
    write_csv_safely('data_utf8_sig.csv', data, encoding='utf-8-sig')

    # 2. Read files safely (explicit encoding)
    print("\n📥 Reading and verifying files (explicit encoding):")
    for filename, encoding in [
        ('data_utf8.csv', 'utf-8'),
        ('data_gbk.csv', 'gbk'),
        ('data_utf8_sig.csv', 'utf-8-sig')
    ]:
        try:
            content = read_file_safely(filename, encoding=encoding)
            print(f"✅ Read OK: {filename} ({len(content)} characters)")
        except Exception as e:
            print(f"❌ Read failed for {filename}: {e}")

    # 3. Read files safely (auto-detected encoding)
    print("\n📥 Reading and verifying files (auto-detected encoding):")
    for filename in ['data_utf8.csv', 'data_gbk.csv', 'data_utf8_sig.csv']:
        try:
            content = read_file_safely(filename, detect_encoding=True)
            print(f"✅ Read OK: {filename} ({len(content)} characters)")
        except Exception as e:
            print(f"❌ Read failed for {filename}: {e}")

    # 4. Convert encodings in batch
    print("\n🔄 Converting encodings in batch:")
    converter = EncodingConverter()
    for filename in ['data_utf8.csv', 'data_gbk.csv', 'data_utf8_sig.csv']:
        if os.path.exists(filename):
            new_name = filename.replace('.csv', '_converted.csv')
            converter.convert_encoding(filename, new_name, 'utf-8')

    # 5. Read the converted files
    print("\n📥 Verifying the converted files:")
    for filename in ['data_utf8_converted.csv',
                     'data_gbk_converted.csv',
                     'data_utf8_sig_converted.csv']:
        if os.path.exists(filename):
            try:
                rows = read_csv_safely(filename)
                print(f"✅ {filename}: {len(rows)} rows")
            except Exception as e:
                print(f"❌ Read failed for {filename}: {e}")

    # Clean up
    print("\n🧹 Removing temporary files...")
    for f in ['data_utf8.csv', 'data_gbk.csv', 'data_utf8_sig.csv',
              'data_utf8_converted.csv', 'data_gbk_converted.csv',
              'data_utf8_sig_converted.csv']:
        if os.path.exists(f):
            os.remove(f)
            print(f"🗑️ Deleted: {f}")

4. 修复原问题的完整解决方案

"""

解决原问题:生成WC-Co数据并确保编码一致

这是对原代码的完整修复

"""

import csv

import random

from datetime import datetime

def generate_wc_data_with_fixed_encoding():

"""

生成WC-Co数据,修复编码问题

原问题:两个文件编码不一致

解决方案:显式指定编码参数

"""

# 定义参数范围

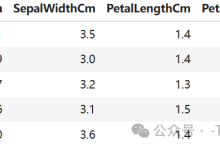

wc_range = (0.2, 0.8) # WC晶粒尺寸 (μm)

cr3c2_range = (0.1, 0.8) # Cr₃C₂含量 (%)

vc_range = (0.1, 0.7) # VC含量 (%)

co_range = (5.0, 15.0) # Co含量 (%)

hv_range = (1200, 1700) # 维氏硬度 (HV)

kic_range = (8.0, 15.0) # 断裂韧性 (MPa·m^0.5)

tres_range = (1800, 2900) # 抗弯强度 (MPa)

pos_range = (1.33, 5.0) # 孔位精度 (mm)

# 生成数据

data = []

for i in range(56):

wc = random.uniform(*wc_range)

co = random.uniform(*co_range)

# 物理相关性模型

hardness_base = 1700 – 600 * (wc – 0.2) / 0.6

hardness = hardness_base – 20 * (co – 5) / 10

toughness = 8 + 7 * (co – 5) / 10 – 2 * (wc – 0.2) / 0.6

strength = 1800 + 1100 * (0.8 – wc) / 0.6 – 100 * (co – 5) / 10

cr3c2 = random.uniform(*cr3c2_range)

vc = random.uniform(*vc_range)

hardness += 50 * (cr3c2 + vc) / 1.5

toughness -= 1.5 * (cr3c2 + vc) / 1.5

accuracy = random.uniform(*pos_range)

# 添加噪声

hardness *= random.uniform(0.95, 1.05)

toughness *= random.uniform(0.95, 1.05)

strength *= random.uniform(0.95, 1.05)

accuracy *= random.uniform(0.95, 1.05)

# 确保在范围内

wc = round(max(wc_range[0], min(wc_range[1], wc)), 2)

cr3c2 = round(max(cr3c2_range[0], min(cr3c2_range[1], cr3c2)), 2)

vc = round(max(vc_range[0], min(vc_range[1], vc)), 2)

co = round(max(co_range[0], min(co_range[1], co)), 2)

hardness = int(max(hv_range[0], min(hv_range[1], hardness)))

toughness = round(max(kic_range[0], min(kic_range[1], toughness)), 2)

strength = int(max(tres_range[0], min(tres_range[1], strength)))

accuracy = round(max(pos_range[0], min(pos_range[1], accuracy)), 4)

data.append([wc, cr3c2, vc, co, hardness, toughness, strength, accuracy])

# 🎯 关键修复:显式指定编码!

# 使用utf-8-sig确保Windows Excel兼容性

encoding_to_use = 'utf-8-sig'

# 保存带表头的文件

header_file = 'wc_co_data_fixed.csv'

with open(header_file, 'w', encoding=encoding_to_use, newline='') as f:

writer = csv.writer(f)

writer.writerow(['WC晶粒尺寸(μm)', 'Cr3C2含量(%)', 'VC含量(%)', 'Co含量(%)',

'维氏硬度(HV)', '断裂韧性(MPa·m^0.5)',

'抗弯强度(MPa)', '孔位精度(mm)'])

writer.writerows(data)

# 保存纯数据版本

raw_file = 'wc_co_data_raw_fixed.csv'

with open(raw_file, 'w', encoding=encoding_to_use, newline='') as f:

for row in data:

f.write(','.join(str(x) for x in row) + '\\n')

# 验证编码

print("="*60)

print("🔧 编码问题修复验证")

print("="*60)

for filename in [header_file, raw_file]:

with open(filename, 'rb') as f:

first_bytes = f.read(3)

if first_bytes == b'\\xef\\xbb\\xbf':

encoding = "UTF-8 with BOM (Excel兼容)"

else:

encoding = "未知(可能无BOM)"

with open(filename, 'r', encoding='utf-8-sig') as f:

lines = f.readlines()

print(f"\\n📁 文件: {filename}")

print(f" 编码: {encoding}")

print(f" 行数: {len(lines)}")

print(f" 大小: {os.path.getsize(filename)} 字节")

print(f" 示例: {lines[0][:50]}…" if lines[0] else " 示例: (空文件)")

print("\\n" + "="*60)

print("✅ 修复完成!两个文件现在使用相同的编码")

print(" 📄 wc_co_data_fixed.csv – 带表头版本")

print(" 📄 wc_co_data_raw_fixed.csv – 纯数据版本")

print(f" 🔤 统一编码: {encoding_to_use}")

print("="*60)

return data, header_file, raw_file

# 运行示例

if __name__ == "__main__":

import os

# 清理可能存在的旧文件

for f in ['wc_co_data_fixed.csv', 'wc_co_data_raw_fixed.csv']:

if os.path.exists(f):

os.remove(f)

# 生成数据

data, header_file, raw_file = generate_wc_data_with_fixed_encoding()

# 显示前5行数据

print("\\n📊 生成的数据示例(前5行):")

print("-"*100)

print("WC(μm) | Cr3C2(%) | VC(%) | Co(%) | 硬度(HV) | 韧性(MPa·√m) | 强度(MPa) | 精度(mm)")

print("-"*100)

for i in range(min(5, len(data))):

row = data[i]

print(f"{row[0]:7.2f} | {row[1]:8.2f} | {row[2]:5.2f} | {row[3]:5.1f} | "

f"{row[4]:8d} | {row[5]:12.2f} | {row[6]:8d} | {row[7]:7.4f}")

🎪 Encoding Selection Guide

Knowing where each encoding fits is the key to solving encoding problems. Each encoding scheme has its own use cases:

"""
Encoding decision helper.
Picks a suitable encoding from a set of requirements.
"""
from typing import Tuple


def encoding_decision_system(requirements: dict) -> Tuple[str, str]:
    """
    Choose an encoding based on the requirements.

    Args:
        requirements: dict of requirements, containing:
            - platform: target platform ('windows', 'linux', 'mac', 'web', 'all')
            - contains_chinese: whether the text contains Chinese
            - excel_compatible: whether Excel compatibility is needed
            - file_size_matters: whether file size matters
            - legacy_system: whether the file is for a legacy system

    Returns:
        (recommended encoding, reason)
    """
    # Rules are checked in order; the first matching rule wins
    encoding_rules = [
        {
            'condition': lambda r: r.get('excel_compatible', False) and r.get('platform') in ['windows', 'all'],
            'encoding': 'utf-8-sig',
            'reason': 'Excel on Windows needs a BOM to recognize UTF-8 correctly'
        },
        {
            'condition': lambda r: r.get('platform') == 'web' or r.get('platform') == 'all',
            'encoding': 'utf-8',
            'reason': 'Web standard with the best cross-platform compatibility'
        },
        {
            'condition': lambda r: r.get('contains_chinese', True) and r.get('legacy_system', False),
            'encoding': 'gbk',
            'reason': 'Compatible with legacy Chinese Windows systems'
        },
        {
            'condition': lambda r: r.get('file_size_matters', False),
            'encoding': 'utf-8',
            'reason': 'No BOM, so the file is smaller'
        },
        {
            'condition': lambda r: r.get('contains_chinese', False) and not r.get('legacy_system', False),
            'encoding': 'utf-8',
            'reason': 'Standard for modern Chinese applications'
        }
    ]

    # Apply the rules
    for rule in encoding_rules:
        if rule['condition'](requirements):
            return rule['encoding'], rule['reason']

    # Default
    return 'utf-8', 'Safe default choice'


# Usage example
if __name__ == "__main__":
    test_cases = [
        {
            'name': 'Windows + Excel + Chinese',
            'requirements': {
                'platform': 'windows',
                'contains_chinese': True,
                'excel_compatible': True,
                'file_size_matters': False,
                'legacy_system': False
            }
        },
        {
            'name': 'Cross-platform web application',
            'requirements': {
                'platform': 'all',
                'contains_chinese': True,
                'excel_compatible': False,
                'file_size_matters': True,
                'legacy_system': False
            }
        },
        {
            'name': 'Legacy Chinese system',
            'requirements': {
                'platform': 'windows',
                'contains_chinese': True,
                'excel_compatible': False,
                'file_size_matters': False,
                'legacy_system': True
            }
        }
    ]

    print("🧠 Encoding decision helper")
    print("=" * 60)
    for case in test_cases:
        encoding, reason = encoding_decision_system(case['requirements'])
        print(f"\n📋 Scenario: {case['name']}")
        print(f"   Requirements: {case['requirements']}")
        print(f"   🎯 Recommended encoding: {encoding}")
        print(f"   💡 Reason: {reason}")

📊 Encoding Diagnostic Tool

"""
Encoding diagnostic tool.
Detects common encoding problems and suggests fixes.
"""
import os
import sys
import locale


def diagnose_system_encoding():
    """Diagnose the system's encoding settings."""
    print("🔧 System encoding diagnostic report")
    print("=" * 60)

    # Collect the system's encoding information
    encoding_info = {
        'Default encoding': sys.getdefaultencoding(),
        'Filesystem encoding': sys.getfilesystemencoding(),
        'Locale encoding': locale.getpreferredencoding(),
        'stdin encoding': sys.stdin.encoding,
        'stdout encoding': sys.stdout.encoding,
        'stderr encoding': sys.stderr.encoding,
        'Python version': sys.version,
        'Platform': sys.platform
    }
    for key, value in encoding_info.items():
        print(f"{key:20}: {value}")

    # Check for common problems
    print("\n🔍 Common problem checks:")

    # 1. Check the Python version
    if sys.version_info < (3, 0):
        print("❌ Python 2.x has serious encoding problems; please upgrade to Python 3.x")
    else:
        print("✅ Running Python 3.x, which has good encoding support")

    # 2. Check for encoding problems on Windows
    if sys.platform == 'win32':
        if locale.getpreferredencoding().lower() in ['cp936', 'gbk', 'gb2312']:
            print("⚠️ This Chinese Windows system defaults to GBK; watch for mismatches with UTF-8")
        else:
            print("✅ Windows system encoding looks fine")

    # 3. Check environment variables
    print("\n🌍 Environment variable check:")
    env_vars = ['PYTHONIOENCODING', 'PYTHONUTF8', 'LANG', 'LC_ALL', 'LC_CTYPE']
    for var in env_vars:
        value = os.environ.get(var, '(not set)')
        print(f"  {var:20}: {value}")

    # Suggestions
    print("\n💡 Suggestions:")
    print("  1. Set an environment variable: set PYTHONIOENCODING=utf-8 (Windows; affects stdin/stdout/stderr)")
    print("  2. Set an environment variable: export PYTHONIOENCODING=utf-8 (Linux/Mac; affects stdin/stdout/stderr)")
    print("  3. Optionally add '# -*- coding: utf-8 -*-' at the top of scripts "
          "(UTF-8 is already the Python 3 default for source files)")
    print("  4. Specify the encoding explicitly in every file operation")


def diagnose_file(filename: str):
    """Diagnose encoding problems in a specific file."""
    if not os.path.exists(filename):
        print(f"❌ File not found: {filename}")
        return

    print(f"\n🔍 File encoding diagnosis: {filename}")
    print("-" * 40)

    # File information
    file_size = os.path.getsize(filename)
    print(f"File size: {file_size} bytes")

    # Read the first 1024 bytes for analysis
    with open(filename, 'rb') as f:
        header = f.read(1024)

    # Check for a BOM
    if header.startswith(b'\xef\xbb\xbf'):
        print("✅ UTF-8 BOM detected")
    elif header.startswith(b'\xff\xfe'):
        print("✅ UTF-16 LE BOM detected")
    elif header.startswith(b'\xfe\xff'):
        print("✅ UTF-16 BE BOM detected")
    else:
        print("ℹ️ No BOM detected")

    # Try reading with different encodings
    test_encodings = ['utf-8', 'utf-8-sig', 'gbk', 'gb2312',
                      'gb18030', 'big5', 'latin-1', 'cp1252']
    print("\n🔤 Encoding tests:")
    for enc in test_encodings:
        try:
            with open(filename, 'r', encoding=enc) as f:
                content = f.read(200)
            # Check for the replacement character U+FFFD ('�', code point 65533)
            has_garbled = any(ord(c) == 65533 for c in content)
            if has_garbled:
                print(f"  ⚠️ {enc:12} - readable but contains replacement characters")
            else:
                # Check for Chinese characters (CJK Unified Ideographs block)
                has_chinese = any('\u4e00' <= c <= '\u9fff' for c in content)
                chinese_info = " (contains Chinese)" if has_chinese else ""
                print(f"  ✅ {enc:12} - reads cleanly{chinese_info}")
        except UnicodeDecodeError as e:
            print(f"  ❌ {enc:12} - decoding failed: {str(e)[:30]}...")
        except Exception as e:
            print(f"  ❌ {enc:12} - error: {e}")


# Run the diagnostics
if __name__ == "__main__":
    diagnose_system_encoding()

    # Test file
    test_files = ['wc_co_data_fixed.csv'] if os.path.exists('wc_co_data_fixed.csv') else []
    for test_file in test_files:
        diagnose_file(test_file)
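The report above lists several encodings, but the one that causes most surprises is the encoding `open()` silently picks when none is given. A minimal way to check it, assuming Python 3.7 or later (for `sys.flags.utf8_mode`), is sketched below; the helper name `default_open_encoding` is hypothetical.

```python
import locale
import os
import sys
import tempfile

def default_open_encoding() -> str:
    """Return the encoding open() picks when no encoding argument is given."""
    fd, path = tempfile.mkstemp(suffix=".txt")
    os.close(fd)
    try:
        with open(path, "w") as f:  # deliberately omits encoding to expose the default
            return f.encoding
    finally:
        os.remove(path)

print("open() default:    ", default_open_encoding())
print("locale preference: ", locale.getpreferredencoding(False))
print("UTF-8 mode enabled:", bool(sys.flags.utf8_mode))
```

If the first line does not report UTF-8 on a given machine, any script that omits `encoding=` will behave differently there than on a UTF-8 system.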

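🎓 Encoding Best Practices Summary

After this analysis, we can summarize a reliable approach to Python encoding problems:

1. The golden rule

Never omit the encoding parameter. Whether reading or writing, always specify the encoding explicitly:

# ❌ Wrong: relies on the system default encoding
with open('file.txt', 'w') as f:
    f.write("内容")

# ✅ Right: specifies the encoding explicitly
with open('file.txt', 'w', encoding='utf-8') as f:
    f.write("内容")

2. Choosing an encoding

- New projects: always use encoding='utf-8'

- Excel compatibility needed: use encoding='utf-8-sig'

- Legacy system maintenance: keep the existing encoding, but specify it explicitly

- When unsure: use encoding='utf-8' together with error handling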
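"Error handling" in the last item can mean catching `UnicodeDecodeError`, or choosing an `errors=` handler when decoding. The sketch below compares the standard handlers on bytes that are not valid UTF-8; the helper name `decode_with` and the sample text are assumptions for this illustration.

```python
raw = "编码".encode("gbk")  # GBK bytes, which are not valid UTF-8

def decode_with(errors: str) -> str:
    """Decode the GBK bytes as UTF-8 using the given error handler."""
    return raw.decode("utf-8", errors=errors)

# 'strict' (the default) refuses to guess and raises.
try:
    decode_with("strict")
    strict_failed = False
except UnicodeDecodeError:
    strict_failed = True

replaced = decode_with("replace")          # undecodable bytes become U+FFFD
escaped = decode_with("backslashreplace")  # undecodable bytes become \xNN escapes
ignored = decode_with("ignore")            # undecodable bytes are dropped silently

print(strict_failed)
print(repr(replaced))
print(repr(escaped))
print(repr(ignored))
```

`strict` is usually the safer default because it surfaces the mismatch immediately; `replace` and `ignore` lose data, so they fit best where a partly readable result is acceptable, such as log viewing.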

3. Complete solution code

"""
A consolidated helper for Python encoding problems.
Wraps the best practices described above.
"""
import os
from typing import Optional, Union


def safe_file_operation(filename: str, mode: str = 'r',
                        content: Optional[Union[str, bytes]] = None,
                        encoding: str = 'utf-8',
                        **kwargs):
    """
    A safe file operation function.

    Args:
        filename: file name
        mode: file mode ('r', 'w', 'a', 'rb', 'wb', etc.)
        content: content to write (str in text mode, bytes in binary mode)
        encoding: encoding (text mode only)
        **kwargs: other open() arguments

    Returns:
        The content read (read modes) or None (write modes)
    """
    # Text mode unless 'b' appears in the mode string
    is_text_mode = 'b' not in mode

    # Build the open() arguments; encoding only applies to text mode
    open_kwargs = kwargs.copy()
    if is_text_mode:
        open_kwargs['encoding'] = encoding

    try:
        with open(filename, mode, **open_kwargs) as f:
            if 'r' in mode:
                return f.read()
            elif 'w' in mode or 'a' in mode:
                if content is not None:
                    f.write(content)
            return None

    except UnicodeDecodeError as e:
        # Decoding failed: try other common encodings (text-mode reads only)
        if is_text_mode and 'r' in mode:
            print(f"⚠️ Decoding error, trying other encodings: {e}")
            for alt_enc in ['utf-8-sig', 'gbk', 'gb2312', 'latin-1']:
                if alt_enc == encoding:
                    continue
                try:
                    with open(filename, mode, encoding=alt_enc, **kwargs) as f:
                        text = f.read()
                    # Report success only after the read has actually succeeded
                    print(f"✅ Succeeded with {alt_enc}")
                    return text
                except UnicodeDecodeError:
                    continue
        raise  # re-raise the original exception

    except Exception as e:
        print(f"❌ File operation failed: {e}")
        raise


# Usage example
if __name__ == "__main__":
    # Safe write
    safe_file_operation(
        'safe_example.txt',
        'w',
        content='你好,安全编码的世界!',
        encoding='utf-8'
    )

    # Safe read
    try:
        content = safe_file_operation(
            'safe_example.txt',
            'r',
            encoding='utf-8'
        )
        print(f"Content read: {content}")
    except Exception as e:
        print(f"Read failed: {e}")

    # Clean up
    if os.path.exists('safe_example.txt'):
        os.remove('safe_example.txt')

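🎯 Conclusion

Encoding problems in Python look simple but hide many pitfalls. With the analysis and code examples in this article, you should now be able to diagnose and fix them.

Remember one simple rule: always specify the encoding explicitly, and never rely on the default. Follow it and most Python encoding headaches go away.

Encoding problems are no longer scary; you now have a complete toolbox for solving them! 🚀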
